#include <learnmodel.h>

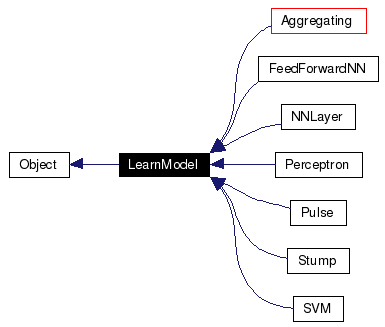

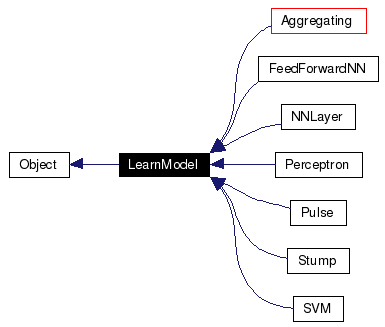

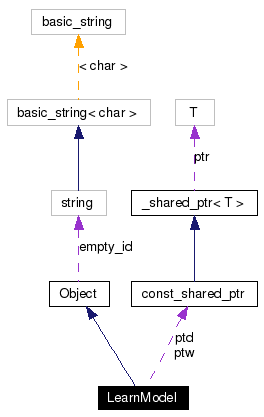

Inheritance diagram for LearnModel:

Public Member Functions

| Type | Member | Description |
|---|---|---|
| | LearnModel (UINT n_in=0, UINT n_out=0) | |
| | LearnModel (const LearnModel &) | |
| virtual Output | operator() (const Input &) const =0 | |
| virtual Output | get_output (UINT idx) const | Get the output of the hypothesis on the idx-th input. |
| virtual LearnModel * | create () const =0 | Create a new object using the default constructor. |
| virtual LearnModel * | clone () const =0 | Create a new object by replicating itself. |
| UINT | n_input () const | |
| UINT | n_output () const | |
| void | set_log_file (FILE *f) | |
| virtual bool | support_weighted_data () const | Whether the learning model/algorithm supports unequally weighted data. |
| virtual REAL | r_error (const Output &out, const Output &y) const | Error measure for regression problems. |
| virtual REAL | c_error (const Output &out, const Output &y) const | Error measure for classification problems. |
| REAL | train_r_error () const | Training error (regression). |
| REAL | train_c_error () const | Training error (classification). |
| REAL | test_r_error (const pDataSet &) const | Test error (regression). |
| REAL | test_c_error (const pDataSet &) const | Test error (classification). |
| virtual void | initialize () | Initialize the model for training. |
| virtual void | set_train_data (const pDataSet &, const pDataWgt &=0) | Set the data set and sample weight to be used in training. |
| const pDataSet & | train_data () const | Return pointer to the embedded training data set. |
| const pDataWgt & | data_weight () const | |
| virtual REAL | train ()=0 | Train with preset data set and sample weight. |
| virtual REAL | margin_norm () const | The normalization term for margins. |
| virtual REAL | margin_of (const Input &x, const Output &y) const | Report the (unnormalized) margin of an example (x, y). |
| virtual REAL | margin (UINT i) const | Report the (unnormalized) margin of the example i. |
| REAL | min_margin () const | The minimal (unnormalized) in-sample margin. |

Protected Member Functions

| Type | Member |
|---|---|
| virtual bool | serialize (std::ostream &, ver_list &) const |
| virtual bool | unserialize (std::istream &, ver_list &, const id_t &=empty_id) |

Protected Attributes

| Type | Member | Description |
|---|---|---|
| UINT | _n_in | dimension of input |
| UINT | _n_out | dimension of output |
| pDataSet | ptd | pointer to the training data set |
| pDataWgt | ptw | pointer to the sample weight (for training) |
| UINT | n_samples | equal to ptd->size() |
| FILE * | logf | file to record train/validate error |

I try to provide two error measures, r_error and c_error. For regression problems, r_error should be defined; for classification problems, c_error should be defined. Both errors can be present at the same time.

The training data is stored with the learning model (as a pointer).

Say: why (the benefit of storing the data with the model, as a pointer); maybe not a pointer.

Say: what is the impact of doing this (what changes from a normal implementation).

Say: wgt: could be NULL if the model doesn't support ...; otherwise should be a probability vector (random_sample)...

The flowchart of the learning ...

    lm->initialize();
    lm->set_train_data(sample_data);
    err = lm->train();
    y = (*lm)(x);
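The four calls above can be tried end to end with a toy stand-in. The sketch below is not the library's code: `MeanModel`, `run_workflow`, and the simplified `Input`, `Output`, `DataSet`, and `pDataSet` aliases are hypothetical and only mimic the shape of the interface, assuming a model that keeps a shared pointer to its training data and whose `train()` returns a training error.

```cpp
#include <cassert>
#include <cstddef>
#include <memory>
#include <utility>
#include <vector>

// Hypothetical stand-ins for the library types; not the real definitions.
using Input = std::vector<double>;
using Output = std::vector<double>;
using Sample = std::pair<Input, Output>;
using DataSet = std::vector<Sample>;
using pDataSet = std::shared_ptr<const DataSet>;

// Toy model: predicts the mean training target regardless of the input.
class MeanModel {
    pDataSet ptd;       // the model keeps a pointer to its training data
    double mean = 0.0;
public:
    void initialize() { mean = 0.0; }
    void set_train_data(const pDataSet& d) { ptd = d; }

    // Fit the mean and return the mean squared training error.
    double train() {
        if (!ptd || ptd->empty()) return 0.0;
        double sum = 0.0;
        for (const Sample& s : *ptd) sum += s.second[0];
        mean = sum / ptd->size();
        double err = 0.0;
        for (const Sample& s : *ptd) {
            const double d = s.second[0] - mean;
            err += d * d;
        }
        return err / ptd->size();
    }

    Output operator()(const Input&) const { return Output{mean}; }
};

// The four steps from the text, in the same order.
inline Output run_workflow(MeanModel* lm, const pDataSet& sample_data,
                           const Input& x, double& err) {
    assert(lm != nullptr);
    lm->initialize();
    lm->set_train_data(sample_data);
    err = lm->train();
    return (*lm)(x);
}
```

Because the data set is shared through a pointer, several models can train on the same samples without copying them, which is one plausible benefit of storing the data with the model.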

Do we really need two errors?

Definition at line 64 of file learnmodel.h.

LearnModel::LearnModel (UINT n_in=0, UINT n_out=0)

Definition at line 70 of file learnmodel.cpp.

LearnModel::LearnModel (const LearnModel &)

Definition at line 89 of file learnmodel.cpp.

virtual REAL LearnModel::c_error (const Output &out, const Output &y) const

Error measure for classification problems.

Reimplemented in MultiClass_ECOC.

Definition at line 117 of file learnmodel.cpp.

References INFINITESIMAL, and LearnModel::n_output().

Referenced by CGBoost::linear_weight(), AdaBoost::linear_weight(), LearnModel::test_c_error(), and LearnModel::train_c_error().

virtual LearnModel * LearnModel::clone () const =0

Create a new object by replicating itself.

return new Derived(*this);

Implements Object.

Implemented in AdaBoost, AdaBoost_ECOC, Aggregating, Bagging, Boosting, Cascade, CGBoost, FeedForwardNN, LPBoost, MgnBoost, MultiClass_ECOC, NNLayer, Perceptron, Pulse, Stump, and SVM.

Referenced by Aggregating::set_base_model().

virtual LearnModel * LearnModel::create () const =0

Create a new object using the default constructor.

The code for a derived class Derived is always

return new Derived();

Implements Object.

Implemented in AdaBoost, AdaBoost_ECOC, Aggregating, Bagging, Boosting, Cascade, CGBoost, FeedForwardNN, LPBoost, MgnBoost, MultiClass_ECOC, NNLayer, Perceptron, Pulse, Stump, and SVM.
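The two one-line bodies quoted for create() and clone() follow the usual virtual-constructor idiom. A minimal sketch, assuming a hypothetical base class `Model` with a hypothetical `param()` accessor (neither is part of the library), shows how a caller holding only a base reference obtains either a fresh object or a copy:

```cpp
#include <cassert>
#include <memory>

// Hypothetical minimal hierarchy; only create() and clone() mirror the text.
class Model {
public:
    virtual ~Model() = default;
    virtual Model* create() const = 0;  // new default-constructed object
    virtual Model* clone() const = 0;   // copy of *this
    virtual int param() const = 0;      // illustrative state only
};

class Derived : public Model {
    int p;
public:
    explicit Derived(int p_ = 0) : p(p_) {}
    // Covariant return types: callers that know the concrete type get a Derived*.
    Derived* create() const override { return new Derived(); }
    Derived* clone() const override { return new Derived(*this); }
    int param() const override { return p; }
};

// Copy a model through the base interface and hand ownership to the caller.
inline std::unique_ptr<Model> duplicate(const Model& m) {
    std::unique_ptr<Model> copy(m.clone());
    assert(copy != nullptr);
    return copy;
}
```

Both functions return raw owning pointers, as in the signatures above, so the sketch wraps the result in `std::unique_ptr` at the call site to avoid leaks.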

const pDataWgt & LearnModel::data_weight () const

Definition at line 122 of file learnmodel.h.

References LearnModel::ptw.

virtual Output LearnModel::get_output (UINT idx) const

Get the output of the hypothesis on the idx-th input.

Reimplemented in Boosting, and MultiClass_ECOC.

Definition at line 136 of file learnmodel.h.

References LearnModel::operator()(), LearnModel::ptd, and LearnModel::ptw.

Referenced by FeedForwardNN::cost(), lemga::op::inner_product(), CGBoost::linear_weight(), AdaBoost::linear_weight(), LearnModel::train_c_error(), LearnModel::train_r_error(), and AdaBoost_ECOC::train_with_partition().

virtual void LearnModel::initialize ()

Initialize the model for training.

Reimplemented in Aggregating, Boosting, CGBoost, FeedForwardNN, MultiClass_ECOC, NNLayer, Perceptron, and SVM.

Definition at line 116 of file learnmodel.h.

Referenced by Bagging::train().

virtual REAL LearnModel::margin (UINT i) const

Report the (unnormalized) margin of the example i.

Reimplemented in Boosting, and MultiClass_ECOC.

Definition at line 161 of file learnmodel.h.

References LearnModel::margin_of(), LearnModel::ptd, and LearnModel::ptw.

Referenced by LearnModel::min_margin().

virtual REAL LearnModel::margin_norm () const

The normalization term for margins.

The margin concept can be normalized or unnormalized. For example, for a perceptron model, the unnormalized margin would be the weighted sum of the input features, the normalized margin would be the distance to the hyperplane, and the normalization term is the norm of the hyperplane weight.

Since the normalization term is usually a constant, it is more efficient to precompute it than to recalculate it every time a margin is requested. The best way is to use a cache. Here I use an easier way: let the users decide when to compute the normalization term.

Reimplemented in Boosting, Perceptron, and SVM.

Definition at line 155 of file learnmodel.h.
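The perceptron example above can be made concrete with a small sketch. `LinearModel` and `normalized_margins` are hypothetical names, the model has no bias term for brevity, and multiplying the weighted sum by a label `y` of +1 or -1 is an assumption about how the margin of (x, y) is signed; none of this is taken from the library's Perceptron.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical linear model (no bias term); not the library's Perceptron.
struct LinearModel {
    std::vector<double> w;  // hyperplane weights

    // Unnormalized margin of (x, y): the label y (+1 or -1) times the
    // weighted sum of the input features.
    double margin_of(const std::vector<double>& x, double y) const {
        assert(x.size() == w.size());
        double s = 0.0;
        for (std::size_t i = 0; i < w.size(); ++i) s += w[i] * x[i];
        return y * s;
    }

    // Normalization term: the Euclidean norm of the weight vector.
    double margin_norm() const {
        double s = 0.0;
        for (double wi : w) s += wi * wi;
        return std::sqrt(s);
    }
};

// Normalized margins for a batch: the caller computes the norm once and
// reuses it, instead of recomputing it for every example.
inline std::vector<double> normalized_margins(
        const LinearModel& m,
        const std::vector<std::vector<double>>& xs,
        const std::vector<double>& ys) {
    assert(xs.size() == ys.size());
    const double norm = m.margin_norm();
    assert(norm > 0.0);
    std::vector<double> out;
    out.reserve(xs.size());
    for (std::size_t i = 0; i < xs.size(); ++i)
        out.push_back(m.margin_of(xs[i], ys[i]) / norm);
    return out;
}
```

With weights (3, 4) the norm is 5, so an unnormalized margin of 11 corresponds to a distance of 2.2 from the hyperplane. Leaving the division to the caller is the "users decide" approach described above; a cache inside the model would hide it at the cost of invalidation logic.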

virtual REAL LearnModel::margin_of (const Input &x, const Output &y) const

Report the (unnormalized) margin of an example (x, y).

Reimplemented in Boosting, MultiClass_ECOC, Perceptron, and SVM.

Definition at line 211 of file learnmodel.cpp.

References OBJ_FUNC_UNDEFINED.

Referenced by LearnModel::margin().

REAL LearnModel::min_margin () const

The minimal (unnormalized) in-sample margin.

Definition at line 215 of file learnmodel.cpp.

References INFINITESIMAL, INFINITY, LearnModel::margin(), LearnModel::n_samples, and LearnModel::ptw.

UINT LearnModel::n_input () const

Definition at line 82 of file learnmodel.h.

References LearnModel::_n_in.

Referenced by FeedForwardNN::add_top(), NNLayer::back_propagate(), NNLayer::feed_forward(), SVM::operator()(), Stump::operator()(), Pulse::operator()(), Perceptron::operator()(), FeedForwardNN::operator()(), Aggregating::set_base_model(), Pulse::set_index(), SVM::signed_margin(), SVM::train(), Stump::train(), and Pulse::train().

virtual Output LearnModel::operator() (const Input &) const =0

Implemented in Bagging, Boosting, Cascade, FeedForwardNN, MultiClass_ECOC, NNLayer, Perceptron, Pulse, Stump, and SVM.

Referenced by LearnModel::get_output().

virtual REAL LearnModel::r_error (const Output &out, const Output &y) const

Error measure for regression problems.

Definition at line 99 of file learnmodel.cpp.

References LearnModel::_n_out, and LearnModel::n_output().

Referenced by FeedForwardNN::_cost(), LearnModel::test_r_error(), and LearnModel::train_r_error().

virtual bool LearnModel::serialize (std::ostream &, ver_list &) const

Reimplemented in Aggregating, Boosting, Cascade, CGBoost, FeedForwardNN, MultiClass_ECOC, NNLayer, Perceptron, Pulse, Stump, and SVM.

Definition at line 74 of file learnmodel.cpp.

References LearnModel::_n_in, LearnModel::_n_out, and SERIALIZE_PARENT.

void LearnModel::set_log_file (FILE *f)

Definition at line 85 of file learnmodel.h.

References LearnModel::logf.

virtual void LearnModel::set_train_data (const pDataSet &, const pDataWgt &=0)

Set the data set and sample weight to be used in training.

If the learning model/algorithm can only do training using uniform sample weight, i.e., support_weighted_data() returns

In order to make the life easier, when support_weighted_data() returns

Reimplemented in Aggregating, Boosting, and MultiClass_ECOC.

Definition at line 170 of file learnmodel.cpp.

References EPSILON, LearnModel::n_samples, LearnModel::ptd, LearnModel::ptw, and LearnModel::support_weighted_data().

Referenced by Aggregating::set_train_data(), Bagging::train(), and AdaBoost_ECOC::train_with_partition().
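The class notes say the sample weight, when given, should be a probability vector. A minimal sketch of what that requirement means is below; `uniform_weight`, `is_probability_vector`, and the tolerance `tol` are hypothetical helpers for illustration and are not claimed to match what set_train_data() actually checks.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Uniform weights 1/n: one natural choice when no weights are supplied.
inline std::vector<double> uniform_weight(std::size_t n) {
    assert(n > 0);
    return std::vector<double>(n, 1.0 / n);
}

// True if every entry is non-negative and the entries sum to 1 within `tol`.
// The tolerance is an assumed value, not the library's EPSILON.
inline bool is_probability_vector(const std::vector<double>& w,
                                  double tol = 1e-9) {
    double sum = 0.0;
    for (double wi : w) {
        if (wi < 0.0) return false;
        sum += wi;
    }
    return std::fabs(sum - 1.0) <= tol;
}
```

A caller could run such a check on its weights before handing them to a model, so that a malformed vector is caught before training starts.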

virtual bool LearnModel::support_weighted_data () const

Whether the learning model/algorithm supports unequally weighted data.

Reimplemented in Bagging, Boosting, Cascade, FeedForwardNN, MultiClass_ECOC, Perceptron, Pulse, Stump, and SVM.

Definition at line 95 of file learnmodel.h.

Referenced by LearnModel::set_train_data().

REAL LearnModel::test_c_error (const pDataSet &) const

Test error (classification).

Definition at line 147 of file learnmodel.cpp.

References LearnModel::c_error().

REAL LearnModel::test_r_error (const pDataSet &) const

Test error (regression).

Definition at line 139 of file learnmodel.cpp.

References LearnModel::r_error().

virtual REAL LearnModel::train ()=0

Train with preset data set and sample weight.

Implemented in AdaBoost, Bagging, Boosting, Cascade, CGBoost, FeedForwardNN, LPBoost, MgnBoost, MultiClass_ECOC, NNLayer, Perceptron, Pulse, Stump, and SVM.

Referenced by Bagging::train(), and AdaBoost_ECOC::train_with_partition().

REAL LearnModel::train_c_error () const

Training error (classification).

Definition at line 131 of file learnmodel.cpp.

References LearnModel::c_error(), LearnModel::get_output(), LearnModel::n_samples, LearnModel::ptd, and LearnModel::ptw.

Referenced by Perceptron::log_error().

const pDataSet & LearnModel::train_data () const

Return pointer to the embedded training data set.

Definition at line 121 of file learnmodel.h.

References LearnModel::ptd.

Referenced by lemga::op::inner_product().

REAL LearnModel::train_r_error () const

Training error (regression).

Definition at line 123 of file learnmodel.cpp.

References LearnModel::get_output(), LearnModel::n_samples, LearnModel::ptd, LearnModel::ptw, and LearnModel::r_error().

virtual bool LearnModel::unserialize (std::istream &, ver_list &, const id_t &=empty_id)

Reimplemented in Aggregating, Boosting, Cascade, CGBoost, FeedForwardNN, MultiClass_ECOC, NNLayer, Perceptron, Pulse, Stump, and SVM.

Definition at line 80 of file learnmodel.cpp.

References LearnModel::_n_in, LearnModel::_n_out, Object::empty_id, and UNSERIALIZE_PARENT.

FILE * LearnModel::logf

file to record train/validate error

Definition at line 72 of file learnmodel.h.

Referenced by FeedForwardNN::log_cost(), Perceptron::log_error(), and LearnModel::set_log_file().
